Why Face → Health Exists

The face is treated as a sensor, not a narrative. Face → Health does not diagnose or infer emotion; it evaluates weak visual signals only through longitudinal correlation. The system supports two aligned modes: retrospective (face today → last night’s sleep) and predictive (face today → tonight’s sleep). Together, they provide both diagnostic context and forward‑looking insight.

What’s Implemented in Production

Health Export Faces

Daily face images are detected and stored with strict date normalization. Each image receives a health‑specific vision analysis focused on alertness, tension, hydration, and stress markers.
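“Strict date normalization” is not spelled out here. One plausible reading is sketched below under that assumption. Each capture timestamp is converted to the user's local calendar date, and naive timestamps are refused rather than assigned a guessed zone. The function name and the fixed UTC-5 offset are illustrative only.

```python
from datetime import date, datetime, timedelta, timezone, tzinfo


def normalize_capture_date(captured_at: datetime, local_tz: tzinfo) -> date:
    """Map a capture timestamp to the user's local calendar date.

    Naive timestamps are rejected instead of guessed, so no date is inferred.
    """
    if captured_at.tzinfo is None or captured_at.utcoffset() is None:
        raise ValueError("capture timestamp must be timezone-aware")
    return captured_at.astimezone(local_tz).date()


# Illustrative fixed offset (UTC-5); a real system would use the user's own zone.
UTC_MINUS_5 = timezone(timedelta(hours=-5))

# 02:30 UTC on 11 March is still the evening of 10 March at UTC-5.
print(normalize_capture_date(datetime(2024, 3, 11, 2, 30, tzinfo=timezone.utc), UTC_MINUS_5))
# prints 2024-03-10
```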

Dual Embeddings

Every face is embedded twice: a CLIP embedding for similarity search and a 128-dimensional face embedding for identity‑specific retrieval.
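The sketch below shows one minimal way to keep the two embedding spaces apart. Each space gets its own brute-force cosine index, so a CLIP query is never ranked against 128-dimensional face vectors. The `EmbeddingIndex` class is hypothetical. A production system would more likely use a vector database or an approximate-nearest-neighbor library.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


@dataclass
class EmbeddingIndex:
    """Hypothetical brute-force index; one instance per embedding space."""

    dim: int
    vectors: dict[str, list[float]] = field(default_factory=dict)

    def add(self, face_id: str, vector: list[float]) -> None:
        # Reject vectors from the wrong space instead of mixing them.
        if len(vector) != self.dim:
            raise ValueError(f"expected {self.dim} dims, got {len(vector)}")
        self.vectors[face_id] = vector

    def top_k(self, query: list[float], k: int = 5) -> list[tuple[str, float]]:
        # Score every stored face, highest similarity first.
        scored = [(fid, cosine(query, v)) for fid, v in self.vectors.items()]
        scored.sort(key=lambda item: item[1], reverse=True)
        return scored[:k]
```

For example, `EmbeddingIndex(dim=128)` would hold the face embeddings and `EmbeddingIndex(dim=512)` the CLIP embeddings. The 512 width assumes a ViT-B/32 CLIP model, and other CLIP variants use different widths.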

Health Linkage

A dedicated health‑record date links every face to its Apple Health daily record. These links feed a materialized view used for ML and correlation analysis.
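The linkage can be sketched with Python's standard-library `sqlite3` module. SQLite has no `MATERIALIZED VIEW`, so this example rebuilds a snapshot table with `CREATE TABLE ... AS SELECT` instead. PostgreSQL, by contrast, offers `CREATE MATERIALIZED VIEW` and `REFRESH MATERIALIZED VIEW` directly. All table and column names here are hypothetical.

```python
import sqlite3

# Hypothetical schema: one row per face, one row per Apple Health day.
SCHEMA = """
CREATE TABLE faces (
    face_id TEXT PRIMARY KEY,
    health_record_date TEXT NOT NULL  -- ISO date, e.g. '2024-03-10'
);
CREATE TABLE health_daily (
    record_date TEXT PRIMARY KEY,
    sleep_hours REAL,
    step_count INTEGER
);
"""


def refresh_face_health_view(conn: sqlite3.Connection) -> None:
    """Rebuild the joined snapshot that stands in for a materialized view."""
    conn.executescript(
        """
        DROP TABLE IF EXISTS face_health_mv;
        CREATE TABLE face_health_mv AS
        SELECT f.face_id, f.health_record_date, h.sleep_hours, h.step_count
        FROM faces AS f
        JOIN health_daily AS h ON h.record_date = f.health_record_date;
        """
    )


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany("INSERT INTO faces VALUES (?, ?)", [("f1", "2024-03-10"), ("f2", "2024-03-11")])
conn.executemany("INSERT INTO health_daily VALUES (?, ?, ?)", [("2024-03-10", 7.2, 9500)])
refresh_face_health_view(conn)
# f2 has no matching daily record, so the inner join leaves it out.
print(conn.execute("SELECT * FROM face_health_mv").fetchall())
```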

Temporal Alignment (Critical)

Both modes use explicit date alignment. No inferred dates, no silent shifts. This keeps the dataset defensible as it grows.
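Explicit alignment can be reduced to one rule per mode that names exactly which daily record supplies the label. This sketch assumes sleep is filed under the date the user wakes up, which matches the next‑day labels used by the predictive mode. Under that assumption, last night's sleep sits on the face date and tonight's sleep sits on the following date. `Mode`, `label_date`, and `aligned_label` are hypothetical names.

```python
from __future__ import annotations

from datetime import date, timedelta
from enum import Enum


class Mode(Enum):
    RETROSPECTIVE = "retrospective"  # face today -> last night's sleep
    PREDICTIVE = "predictive"  # face today -> tonight's sleep


def label_date(face_date: date, mode: Mode) -> date:
    """Return the daily-record date whose sleep value is the label.

    Assumes sleep is filed under the wake-up date.
    """
    if mode is Mode.RETROSPECTIVE:
        return face_date
    return face_date + timedelta(days=1)


def aligned_label(face_date: date, mode: Mode, sleep_by_date: dict[date, float]) -> float | None:
    """Look up exactly one date; never fall back to a neighboring day."""
    return sleep_by_date.get(label_date(face_date, mode))
```

A missing record yields `None`, and the caller can drop that row. The lookup never borrows an adjacent day, which is the kind of silent shift the rule above excludes.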

Machine Learning Direction

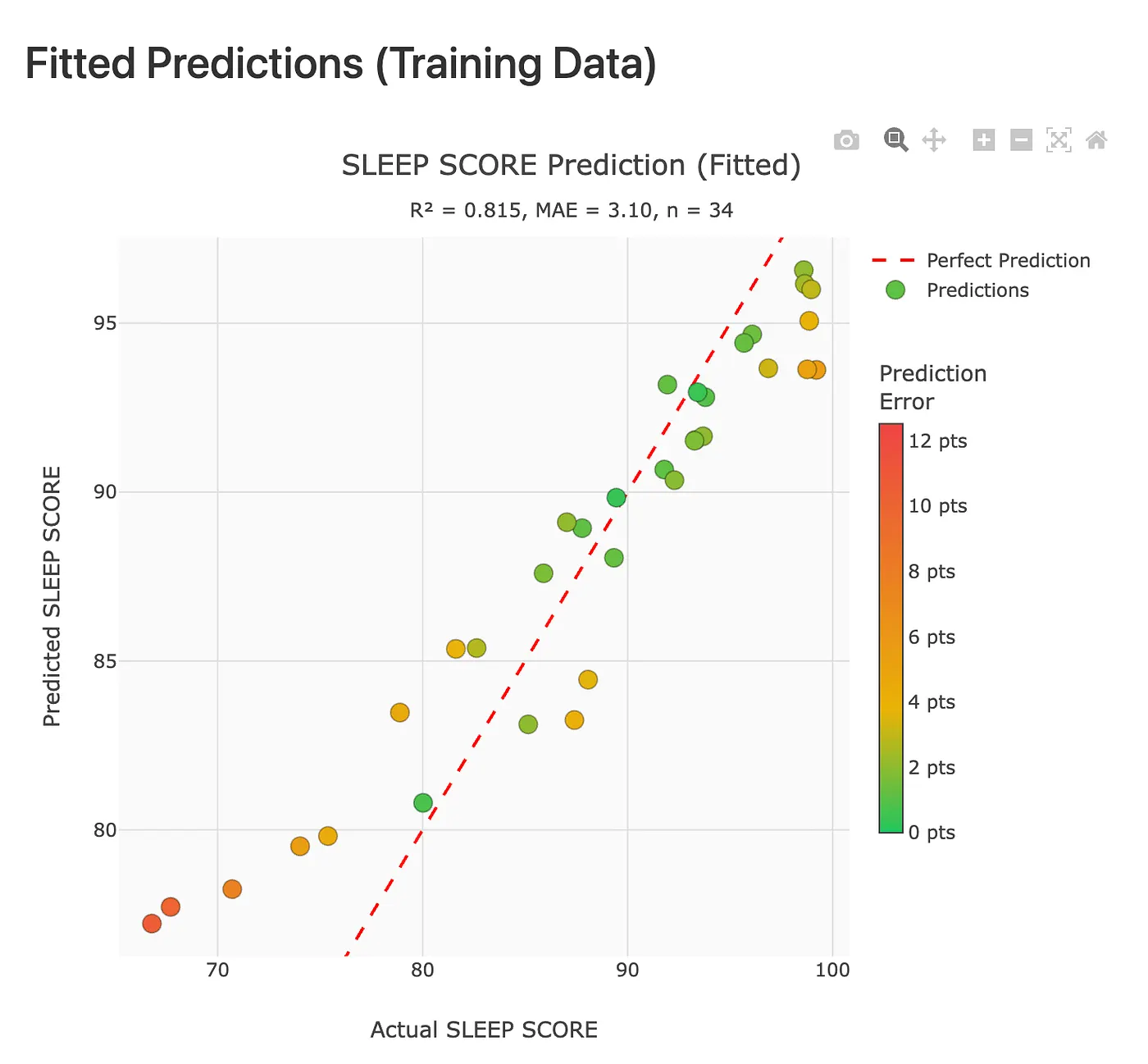

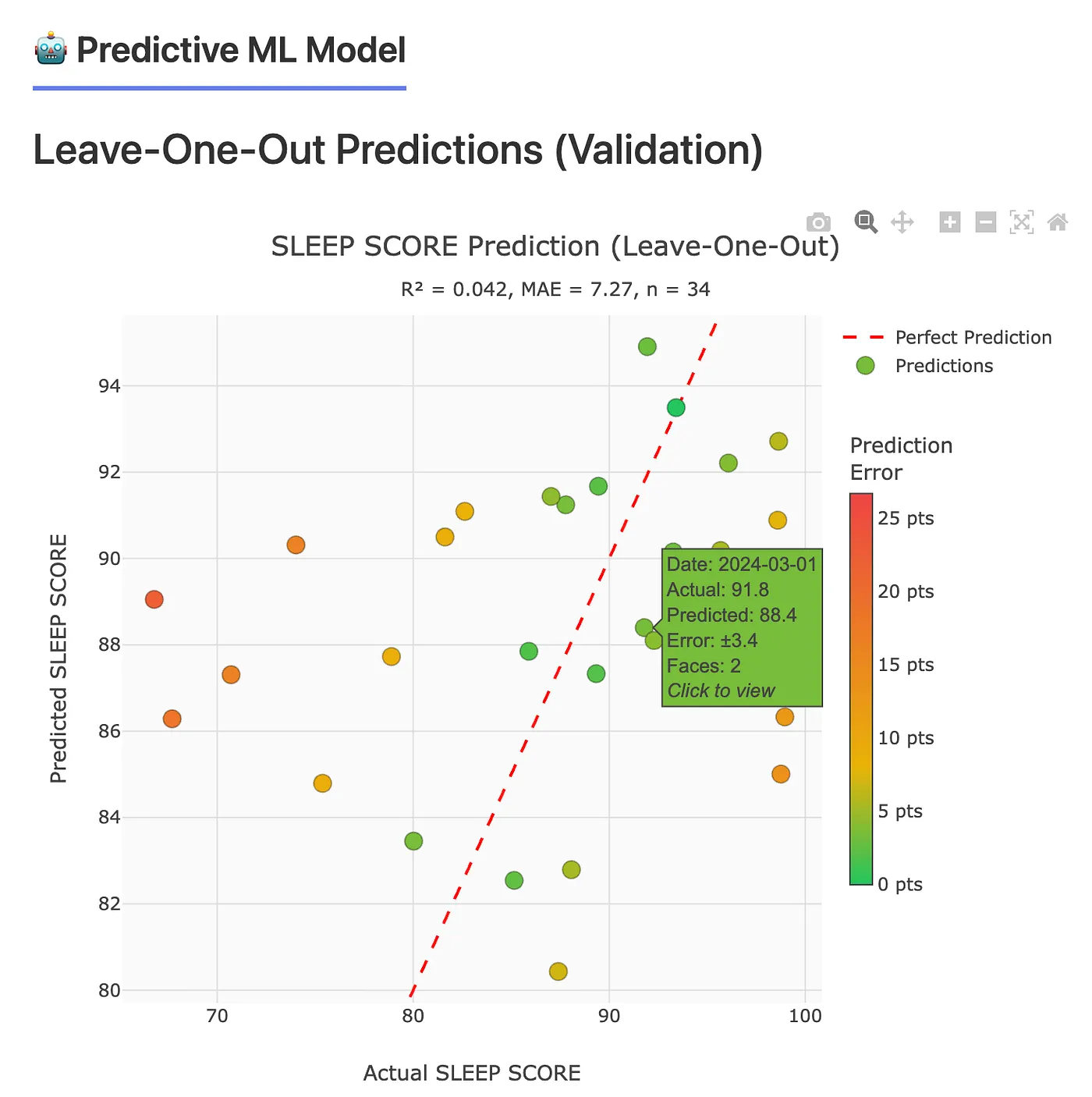

Two complementary models run in parallel. The retrospective model explains current appearance using last night’s sleep. The predictive model estimates tonight’s sleep from today’s face image and same‑day activity metrics. Early models prioritize interpretability and temporal integrity over raw accuracy.

Retrospective (Diagnostic)

Face today → last night’s sleep. Useful for explaining current appearance and recovery signals.

Predictive (Forecast)

Face today → tonight’s sleep. Uses same‑day activity metrics and face embeddings as features, with next‑day sleep records as labels.
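For the predictive mode, a training row could be assembled as follows. The face embedding and same-day activity form the features, and the sleep value from the next day's record is the label. The `DayRecord` fields (`steps`, `active_kcal`, `sleep_hours`) are hypothetical stand-ins for whatever Apple Health metrics the view exposes.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DayRecord:
    """Hypothetical daily record: same-day activity plus the sleep filed that day."""

    steps: float
    active_kcal: float
    sleep_hours: float | None


def build_predictive_rows(
    face_embeddings: dict[date, list[float]],
    records: dict[date, DayRecord],
) -> list[tuple[date, list[float], float]]:
    """Return (face_date, features, next-day sleep) rows in date order."""
    rows = []
    for face_date in sorted(face_embeddings):
        today = records.get(face_date)
        tomorrow = records.get(face_date + timedelta(days=1))
        # Skip days without same-day activity or without a next-day sleep label.
        if today is None or tomorrow is None or tomorrow.sleep_hours is None:
            continue
        features = face_embeddings[face_date] + [today.steps, today.active_kcal]
        rows.append((face_date, features, tomorrow.sleep_hours))
    return rows
```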

Generalization First

Early results show a better fit on training data than on validation data; the goal is clean data and defensible generalization.
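One way to check generalization is sketched here under simplifying assumptions. Rows are sorted by date, the model is fit only on the earlier days, and training error is compared with validation error. A single scalar feature and an ordinary least-squares line stand in for the real models, chosen because the fitted slope can be read directly. The function names and the 80/20 split are illustrative.

```python
from __future__ import annotations

from datetime import date


def chronological_split(rows: list[tuple[date, float, float]], train_fraction: float = 0.8):
    """Sort by date, then cut so every validation day follows every training day."""
    ordered = sorted(rows, key=lambda row: row[0])
    cut = int(len(ordered) * train_fraction)
    return ordered[:cut], ordered[cut:]


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("feature is constant on the training split")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def mean_abs_error(xs, ys, intercept: float, slope: float) -> float:
    return sum(abs(y - (intercept + slope * x)) for x, y in zip(xs, ys)) / len(xs)


def train_vs_validation(rows, train_fraction: float = 0.8):
    """Fit on earlier days only; return (train MAE, validation MAE, slope)."""
    train, val = chronological_split(rows, train_fraction)
    if not train or not val:
        raise ValueError("need rows on both sides of the split")
    tx, ty = [r[1] for r in train], [r[2] for r in train]
    vx, vy = [r[1] for r in val], [r[2] for r in val]
    intercept, slope = fit_line(tx, ty)
    return mean_abs_error(tx, ty, intercept, slope), mean_abs_error(vx, vy, intercept, slope), slope
```

A chronological split matters for daily data. A random split would let the model train on days that come after validation days, which can make validation error look better than it will be on genuinely new days.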

Correlation is always reported as correlation. Causation is never implied.
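Reporting can be built to keep that wording honest. The sketch below computes Pearson's r together with its sample size and labels the result as an association. `pearson_r` and `describe_association` are hypothetical helpers. A fuller analysis might also report uncertainty, for example a confidence interval.

```python
import math


def pearson_r(xs: list, ys: list) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need two equal-length series with at least 3 points")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    return sxy / math.sqrt(sxx * syy)


def describe_association(name_x: str, name_y: str, xs: list, ys: list) -> str:
    """Format r with n and an explicit non-causal label."""
    r = pearson_r(xs, ys)
    return (
        f"correlation between {name_x} and {name_y}: r = {r:.2f} (n = {len(xs)}); "
        "association only, not causation"
    )
```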